Global Privacy Watchdog Compliance Digest: March 2026 Edition

- christopherstevens3

- Apr 7

Updated: Apr 10

📰 From the Editor: March 2026

March 2026 reflects a subtle yet consequential shift in how accountability is understood across artificial intelligence (AI) governance, data privacy, and data protection. For years, organizations have been evaluated on what they could document, describe, and explain. That model is now under pressure.

Across jurisdictions, regulators are increasingly asking a different set of questions. They are no longer satisfied with evidence that someone designed the system responsibly or considered the risks at the time of deployment. Instead, they are examining how systems function in practice and how risks emerge over time. They are determining whether organizations retain meaningful control over the outcomes produced by those systems.

This shift does not solely result from new legal and regulatory principles. It is driven by the operational reality of modern systems. AI does not operate in discrete moments. It operates continuously, adapting to new data and generating outputs at scale. It can influence decisions in ways that are not always visible at a single point. As a result, accountability can no longer be anchored solely to individual decisions. It must extend to system operation itself.

What emerges is a new standard. Documentation remains necessary, but it is no longer sufficient. Governance must be demonstrable and explainable in practice. It must show that systems were observable, constrained, and capable of intervention as they operated. In this environment, accountability is no longer inferred from intent. It is established through evidence of control.

The implications are immediate. AI governance, data privacy, and data protection professionals must reconsider how compliance is designed and evaluated. Impact assessments, policies, and controls must include mechanisms that reflect how systems behave under real-world conditions. The question is no longer whether a system was compliant at the moment it was deployed. It is whether it remained governable, explainable, and trustworthy throughout its lifecycle.

This month’s topic explores this transition in depth. It examines how autonomous systems challenge traditional models of accountability and why governance must evolve from periodic review to continuous oversight. More importantly, it asks a question that will define the next phase of enforcement: If a system produces an outcome that should not have occurred, can the organization demonstrate that it had the ability to prevent it? That question will increasingly shape not only regulatory expectations but also the credibility of governance itself.

Respectfully,

Christopher L Stevens

Editor, Global Privacy Watchdog Compliance Digest

__________________________________________________________________________________

🌍 Topic of the Month: From Transparency to Proof: The New Evidence Standard in Global Data Protection Enforcement

✨ Introduction

Global artificial intelligence (AI) governance, data privacy, and data protection have entered a phase where documentation alone no longer satisfies accountability. Across jurisdictions, regulators are increasingly evaluating whether organizations can produce contemporaneous, operational evidence demonstrating that personal data processing was lawful, fair, and subject to effective safeguards. This evidentiary standard has developed in parallel with the rapid deployment of AI systems capable of adaptive and autonomous behavior. These systems do not operate solely through discrete and human-directed decisions. Instead, they generate output continuously, which is influenced by evolving data inputs, model adjustments, and environmental conditions. As a result, the foundational assumption that accountability can be established by reconstructing individual decisions is becoming less reliable.

This article advances a central proposition. In environments where systems act continuously and adapt over time, accountability must extend beyond retrospective explanation. It must include the organization's ability to show that system performance was visible, limited, and open to effective intervention at all times. For AI governance, data privacy, and data protection professionals, this shift has direct implications. It affects how the lawful basis is maintained over time and how data subject rights are enabled in practice. Moreover, it affects how fairness and bias are assessed and how organizations prepare for legal and regulatory scrutiny. The question is no longer limited to what occurred. It increasingly includes whether the organization retained sufficient control over what could occur.

📖 Key Terms

A clear conceptual framework is necessary to understand how autonomous systems alter the object of governance. Traditional data privacy and data protection terminology focuses on data, processing activities, and decisions. Autonomous systems introduce a different focal point: system behavior across time.

Table 1 presents the terms below as governance constructs rather than abstract definitions. Each reflects a dimension of accountability that must now be operationalized in practice.

Table 1: Core Terms Framing Autonomous AI Governance

| Term | Definition | Governance Relevance |
| --- | --- | --- |
| Autonomous Decision Systems | Systems capable of initiating actions without discrete human instruction | Challenges decision-based accountability models |
| Behavioral Drift | The divergence of system outputs from validated conditions or original design intent over time | Indicates degradation of compliance after deployment |
| Continuous Accountability | The ability to demonstrate system behavior and governance effectiveness throughout the operational lifecycle | Enables defensibility under regulatory scrutiny |
| Control Plane Governance | Real-time monitoring, constraint, and intervention mechanisms applied to system behavior | Translates governance into operational control |
| Intervention Traceability | The ability to demonstrate when oversight occurred, when it did not occur, and how it influenced outcomes | Critical for demonstrating accountability in contested cases |

Source Note: These constructs reflect lifecycle governance and risk management principles articulated in contemporary AI oversight frameworks (National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development (OECD), 2024; OECD, 2022; Papagiannidis et al., 2025).
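Behavioral drift, as defined in Table 1, can be made measurable. The following is a minimal sketch, not a prescribed method. It uses the Population Stability Index (PSI), a common distribution-comparison statistic that the frameworks cited above do not mandate, to compare a validated baseline of model scores with recent production scores. The function names, the ten-bin layout, and the 0.2 alert threshold are illustrative assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between two score samples (0.0 = identical binned distributions)."""
    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; fall back to 1.0 if the range is zero.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the first or last bin.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / total, eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical alert threshold; an organization would set its own and document why.
DRIFT_ALERT_THRESHOLD = 0.2


def drift_status(baseline: Sequence[float], current: Sequence[float]) -> dict:
    """Summarize drift as a value plus an alert flag suitable for logging."""
    psi = population_stability_index(baseline, current)
    return {"psi": psi, "alert": psi > DRIFT_ALERT_THRESHOLD}
```

Run on a schedule against production outputs, a check of this kind produces a dated series of drift measurements, which is one form of the longitudinal evidence the article argues regulators increasingly expect.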

🔍 The Shift from Decisions to Behavior

Modern data privacy and data protection frameworks have historically been structured around discrete decision-making events. Legal obligations, such as lawful-basis determination, fairness assessment, and data-subject rights adjudication, are typically evaluated at identifiable points. This model becomes increasingly strained in the context of autonomous and adaptive systems.

Figure 1 contrasts two scenarios using the same underlying AI capability. On the left, siloed data sources limit the system’s ability to contextualize user activity, resulting in outputs that are technically correct but operationally irrelevant. On the right, a unified data environment enables the system to generate outputs that align more closely with user behavior and business objectives. The critical insight is not the improvement in personalization. It is the causal relationship between data architecture and system behavior.

The same model, operating under different data conditions, produces different outcomes. This has direct implications for governance:

· Accountability must extend to data architecture decisions, not just model design.

· Fairness and relevance are contingent on data completeness and integration.

· System operation cannot be evaluated independently of data inputs.

Figure 1: Data Architecture as a Determinant of System Behavior

Source Note: Figure adapted to illustrate the impact of data architecture and integration on AI system outputs and governance outcomes (National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development, 2024, 2022).

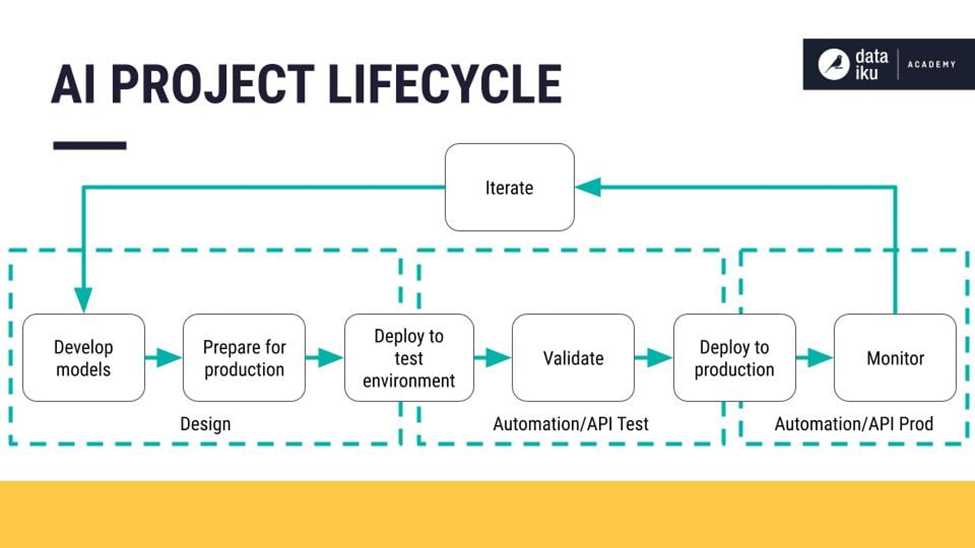

Figure 2 should be understood as more than a representation of the machine learning lifecycle. It highlights how governance is typically distributed across discrete stages, with controls applied at specific checkpoints rather than continuously. The critical insight is structural: Governance is applied at stages, while risk develops between them.

This creates a gap in which system activity can evolve without direct oversight. As systems move from one stage to the next, their data inputs, model parameters, and operating conditions can change. The assumptions made during earlier stages may no longer hold, yet there is no mechanism to detect or address that change in real time. Figure 2, therefore, identifies the point at which lifecycle-based governance becomes insufficient.

Figure 2: The Machine Learning Lifecycle and Points of Governance Fragmentation

Source Note: Figure adapted to illustrate the machine learning lifecycle and associated governance considerations across development, deployment, and monitoring stages (National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development, 2024, 2022).

At a glance, the lifecycle appears structured, iterative, and well-governed. However, this representation reveals a critical limitation when viewed through a governance lens. Governance is typically applied at stages. Risk emerges between them.

⚠️ Why Evidence Architecture Requires Reconsideration

Most mature data privacy and data protection programs build on a strong evidentiary foundation. They maintain records of processing activities, conduct data protection impact assessments (DPIAs) or privacy impact assessments (PIAs), document model behavior, and retain audit logs. These artifacts are designed to demonstrate compliance, support regulatory inquiries, and enable defensible decision-making. In stable, rules-based environments, this approach is effective. However, autonomous systems expose a critical limitation in this model. Evidence architecture is designed to explain what was intended and what was recorded. It is not designed to demonstrate how a system behaves over time under changing conditions. This distinction matters in practice.

A DPIA or PIA may accurately describe anticipated risks at deployment yet fail to capture how those risks evolve as the system adapts. Model validation may confirm acceptable performance at a specific point but provide no visibility into current system behavior. Logs may record single events without showing the patterns that build up over time and cause harm. Enforcement in the European Union (EU) illustrates this limitation in generative AI systems. The Italian Data Protection Authority concluded its investigation into OpenAI with findings that raised concerns about the lawful basis, transparency, and the ability to explain how system outputs were generated. The case illustrates a broader regulatory expectation. Documentation describing system design and intended safeguards does not, on its own, demonstrate how a system functioned in practice.

For organizations, the implication is significant. Evidence architecture must support the reconstruction of system operation under real-world conditions. Organizations must be able to show how outputs were produced and how safeguards performed at the time of processing. Without this capability, they may be unable to demonstrate compliance, even where documentation appears complete. An organization can maintain extensive documentation and still be unable to answer a regulator’s most direct question: How did your system behave in practice, and how do you know your controls were effective?

This is where evidence architecture begins to break down. Not because it is insufficient in principle, but because it is anchored to a model of decision-making that assumes stability, traceability, and discrete events. Autonomous systems replace those conditions with continuous execution, adaptation, and pattern-based risk. To illustrate this divergence more concretely, Table 2 compares traditional governance assumptions with the operational realities of autonomous systems.

Table 2: Governance Assumptions and Operational Realities in Autonomous Systems

| Dimension | Traditional Systems | Autonomous AI Systems | Governance Implication |
| --- | --- | --- | --- |
| Decision Structure | Discrete, event-based | Continuous, evolving outputs | Accountability cannot be tied to a single moment |
| Oversight Model | Periodic review | Continuous monitoring required | Static governance becomes insufficient |
| Risk Emergence | Immediate and observable | Gradual and cumulative | Harm may only be visible through patterns |
| System Behavior | Stable and predictable | Adaptive and dynamic | Controls must be continuously validated |
| Traceability | Point-in-time reconstruction | Longitudinal reconstruction required | Evidence must capture behavior across time |

Source Note: This comparison reflects lifecycle risk management, continuous monitoring, and system observability challenges identified in contemporary AI governance frameworks (European Union, 2024; National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development, 2024, 2022; Papagiannidis et al., 2025).

⚖️ The Evolving Burden of Proof

The burden of proof in data privacy and data protection has always required organizations to demonstrate compliance. In practice, organizations achieve this through documentation such as policies, records of processing, impact assessments, and audit artifacts. That approach is no longer sufficient on its own.

As autonomous and adaptive systems become more prevalent, regulators face a recurring challenge. Organizations can often explain how a system was designed, yet they struggle to demonstrate how it behaved at the time a decision was made or harm occurred. This gap reflects a broader shift from a documentation-based model of accountability to one that focuses on system functioning and control.

In 2026, the Federal Trade Commission announced a settlement with Air AI and its operators, resolving allegations that the company made deceptive claims about the capabilities and performance of its AI-driven business system. The case did not turn on how the system was designed or described in documentation. It turned on whether the company could substantiate how the system performed in real-world use. The implication is direct. Where organizations cannot demonstrate that system outputs align with their representations, liability may arise even in the absence of formal governance structures. The burden of proof is therefore shifting toward demonstrable system performance rather than documented intent.

Traditionally, legal and regulatory inquiry has been retrospective. Organizations were expected to explain what happened, identify the lawful basis, and point to safeguards. This model assumes that decisions are discrete and explainable. Autonomous systems weaken that assumption. As a result, the burden of proof is evolving toward what may be described as preventive accountability.

Under this approach, organizations must demonstrate not only that safeguards existed but also that they functioned effectively over time. In practical terms, this requires evidence that:

· Governance controls operated effectively in practice, not only in design.

· Intervention mechanisms were available and used when necessary.

· Monitoring mechanisms could identify emerging risks.

· System operation was subject to defined constraints.
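One way to make the four kinds of evidence listed above contemporaneous rather than reconstructed is to record monitoring checks and interventions in a tamper-evident log as they happen. The sketch below is illustrative only. The `EvidenceLog` class, its field names, and the event types are hypothetical, and hash chaining is one technique among several for showing that records were not altered after the fact.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry's content deterministically (keys sorted before encoding)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class EvidenceLog:
    """Append-only log in which each entry commits to the one before it."""

    entries: list = field(default_factory=list)

    def record(self, event_type: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body: dict = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event_type": event_type,  # e.g. "monitor_check", "intervention"
            "detail": detail,
            "prev_hash": prev_hash,
        }
        # The hash covers everything above, including the link to the prior entry.
        body["hash"] = _digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A log of this kind records when oversight occurred. Gaps between expected and recorded checks also show when it did not, which matches the definition of intervention traceability in Table 1.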

This shift does not replace evidentiary accountability. It extends it. The focus is no longer limited to explaining past decisions. It includes demonstrating that systems were governed in a manner that reduced the likelihood of unlawful or harmful outcomes. For AI governance, data privacy, and data protection professionals, the implication is clear. Accountability must now be supported by continuous oversight, demonstrable control, and evidence of operational effectiveness, not documentation alone.

🧠 Real-Time Accountability and Control Plane Governance

The shift toward preventive accountability creates a practical challenge. If organizations must demonstrate that they govern systems over time, governance itself must operate continuously rather than at fixed points in the lifecycle. Most existing programs are not designed this way. They rely on periodic controls such as impact assessments at deployment, validation during testing, and scheduled audits. These mechanisms remain important. However, they are insufficient in environments where systems adapt, update, and produce continuous outputs. In such settings, risk does not wait for the next review cycle.

To address this gap, organizations are moving toward what can be described as “control plane governance.” As used in this article, the term refers to the continuous layer of technical and organizational controls that monitor, constrain, and manage system conduct during operation. It comes from the architecture of distributed systems, where the control plane manages how systems operate in real time. In the context of AI governance, it describes the mechanisms required to ensure that systems remain observable, bounded, and subject to intervention throughout their lifecycle. If accountability depends on demonstrating control over system behavior, oversight cannot be episodic. It must be embedded within system operation. Figure 3 illustrates this shift.

Figure 3: Human-in-the-Loop Oversight in Autonomous AI Systems

Source Note: Figure adapted to illustrate human-in-the-loop AI system architectures and iterative decision processes, consistent with principles of human oversight, continuous monitoring, and lifecycle risk management in contemporary AI governance frameworks (European Union, 2024; National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development, 2024, 2022).
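The control plane idea can be sketched in a few lines of code. The example below is a simplified illustration under stated assumptions, not a reference architecture. The `ControlPlane` class, its output bounds, and the rule that pauses the system after a set number of constrained outputs are all hypothetical. It shows the three properties named in this section: every call is observable (audited), bounded (clamped to a permitted range), and subject to intervention (paused until a human resumes it).

```python
from dataclasses import dataclass, field
from typing import Callable


class SystemPaused(RuntimeError):
    """Raised when a request arrives while the system is paused."""


@dataclass
class ControlPlane:
    model: Callable[[dict], float]  # the governed system (any scoring function)
    min_output: float               # lower bound of the permitted output range
    max_output: float               # upper bound of the permitted output range
    max_violations: int = 3         # constrained outputs tolerated before pausing
    paused: bool = False
    violations: int = 0
    audit: list = field(default_factory=list)

    def decide(self, request: dict) -> float:
        # Intervention: a paused system refuses to act, and the refusal is logged.
        if self.paused:
            self.audit.append({"event": "blocked", "request": request})
            raise SystemPaused("system is paused pending human review")

        raw = self.model(request)
        # Constraint: clamp the output into the permitted range.
        bounded = min(max(raw, self.min_output), self.max_output)
        constrained = bounded != raw
        if constrained:
            self.violations += 1
            if self.violations >= self.max_violations:
                self.paused = True

        # Observability: record what the model produced and what was released.
        self.audit.append(
            {"event": "decision", "raw": raw, "released": bounded,
             "constrained": constrained}
        )
        return bounded

    def resume(self, reviewer: str) -> None:
        """Human oversight: only an explicit, attributed action lifts the pause."""
        self.paused = False
        self.violations = 0
        self.audit.append({"event": "resumed", "reviewer": reviewer})
```

In this sketch the audit trail is an in-memory list. A production system would need durable, access-controlled storage, and the pause rule would be derived from the organization's own risk thresholds.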

Before turning to the operational implications of sustained accountability, it is necessary to examine a model that underpins most organizational approaches to AI governance: the system lifecycle. The lifecycle model is widely accepted as a comprehensive framework for managing risk. It structures development, validation, deployment, and monitoring into defined stages, supported by iterative feedback loops. In practice, it forms the backbone of how AI governance, data privacy, and data protection controls are designed and applied.

Figure 4 builds on the limitation identified in Figure 2 by illustrating the iterative nature of the AI lifecycle. Systems are continuously refined through feedback loops, with updates applied over successive cycles of development, deployment, and monitoring. This model assumes that iteration is sufficient to manage risk.

However, iteration is delayed. Risks are identified after they emerge, and improvements are applied in subsequent cycles. In continuously operating systems, this creates a timing mismatch. System performance evolves in real time. Governance responds in intervals. Figure 4 demonstrates why iteration alone cannot satisfy modern accountability requirements. Without continuous monitoring and control, risks can emerge and persist between iterations.

Figure 4: AI Lifecycle Governance and the Transition from Iteration to Continuous Accountability

Source Note: Figure adapted to illustrate the AI system lifecycle, including development, validation, deployment, and monitoring stages, and to highlight governance implications related to iterative development and continuous oversight (European Union, 2024; National Institute of Standards and Technology, 2023; Organization for Economic Co-operation and Development, 2024, 2022).

The practical implication is clear. Governance models built around lifecycle stages and periodic iterations are insufficient for systems that operate continuously. As a result, accountability cannot depend solely on how systems are developed and updated. It must also account for how they behave between those moments.

🌐 Cross-Jurisdictional Convergence

A clear pattern is emerging across global legal and regulatory frameworks. While jurisdictions differ in legal structure and enforcement mechanisms, they are increasingly aligned on a common expectation: AI systems must be governed continuously, not just at the point of design or deployment. This convergence is visible across leading frameworks.

The EU’s AI Act establishes a risk-based model that requires ongoing monitoring, human oversight, and lifecycle risk management, particularly for high-risk systems. In the US, the NIST AI Risk Management Framework emphasizes identifying, measuring, and managing risks continuously across the entire AI system lifecycle. Similarly, the OECD’s AI Principles and related guidance reinforce accountability, robustness, and human-centered governance as ongoing obligations rather than one-time requirements. UNESCO’s Recommendation on the Ethics of Artificial Intelligence further emphasizes the necessity of sustained oversight and responsibility in automated systems. Taken together, these frameworks reflect a shared shift in legal and regulatory thinking.

Governance is no longer viewed as a front-loaded activity. It is not sufficient to demonstrate that a system was responsibly designed, tested, and approved. Regulators are increasingly focused on how systems perform in practice. They want to understand how systems behave under real-world conditions, how risks evolve, and how organizations respond to those changes.

For AI governance, data privacy, and data protection professionals, this convergence has practical consequences.

1. First, compliance expectations are becoming more consistent across jurisdictions, even where legal regimes differ. Organizations operating globally should expect similar scrutiny regarding monitoring, oversight, and control.

2. Second, lifecycle governance is becoming a minimum baseline, not a differentiator. Programs that rely solely on pre-deployment assessments or periodic reviews will increasingly fall short of regulatory expectations.

3. Third, accountability is being evaluated through outcomes and operational effectiveness. The existence of policies or documented controls is no longer sufficient. Organizations must demonstrate that those controls functioned as intended under real-world conditions.

The direction of travel is clear. Across jurisdictions, governance is evolving from static assurance to operational accountability. Organizations that align with this shift will be better positioned to meet regulatory expectations and defend their systems under scrutiny.

📌 Key Takeaways

The analysis presented above reflects a structural evolution in how accountability is understood and operationalized.

· Accountability is expanding from retrospective explanation to continuous oversight.

· Autonomous systems require governance models that address behavior across time.

· Effective governance requires real-time monitoring, constraint, and intervention capabilities.

· Evidence architecture remains necessary but is no longer sufficient on its own.

· Organizations must align legal, technical, and operational controls to maintain compliance.

· Regulatory expectations are converging on lifecycle accountability.

· Risk increasingly emerges through cumulative patterns rather than isolated decisions.

· The ability to reconstruct system activity is critical for rights enablement and defensibility.

❓ Key Questions for Stakeholders

The shift toward accountability during system operation requires stakeholders to reassess whether their governance models can withstand real-world scrutiny. The following questions are designed to support that evaluation across functions.

1. Board and Senior Leadership:

· Are we treating AI governance as a strategic risk issue or as a technical implementation detail?

· Can we demonstrate that our AI systems remain controlled under real-world operating conditions, not just at deployment?

· Do we understand where autonomy exists in our systems and how it affects organizational risk?

2. Data Protection and Privacy Functions:

· Are our records of processing and impact assessments supported by evidence of how systems behaved in practice?

· Can we demonstrate that the lawful basis remains valid as system conduct evolves?

· Can we respond to access, objection, or contestation requests that require reconstruction of system behavior across time?

3. Engineering and Data Science:

· Can we explain how system outputs are generated under current operating conditions, not just at initial validation?

· How do we detect and respond to behavioral drift or unexpected outputs?

· What controls exist to constrain system operation after deployment?

4. Information Security and Risk Management:

· Are logging and telemetry sufficient to support both operational oversight and regulatory inquiry?

· Can our systems be paused, overridden, or adjusted in real time if risk thresholds are exceeded?

· Do our monitoring capabilities allow us to detect harmful patterns as they emerge, rather than after they occur?

5. Legal and Compliance:

· Are we prepared to defend system functionality under regulatory scrutiny, not just system design?

· Can we demonstrate that governance controls functioned effectively at the time a decision was made?

· Do our compliance frameworks account for continuous system operation rather than discrete decision events?

🔚 Conclusion

The evolution of agentic and autonomous AI systems is not simply about introducing new risks. It is redefining the conditions that establish accountability. Autonomous and agentic AI systems challenge the long-standing assumption that governance can rely on retrospective explanation. In doing so, they expose a deeper truth: accountability that depends on reconstruction is inherently fragile in environments defined by continuous change.

In this context, governance must evolve from documentation to design and from explanation to control. Organizations are no longer evaluated solely on what they can say about their systems, but on what they can demonstrate those systems are capable of doing. For AI governance, data privacy, and data protection professionals, this shift is both structural and immediate. It requires moving beyond static compliance frameworks toward systems that are observable, constrained, and responsive in real time. It requires aligning legal, technical, and operational controls so that accountability is not implied but evidenced through behavior.

Looking ahead, this evolution will shape not only legal and regulatory expectations but also the credibility of organizational governance itself. As systems become more autonomous, the distinction between intention and outcome will narrow. What organizations design will matter less than what their systems do. The defining question for 2026 is no longer what happened. It is whether the organization could prevent what should not have happened and can prove it.

📜 References

European Union. (2016). Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation) (Text with EEA relevance). https://eur-lex.europa.eu/eli/reg/2016/679/oj

European Union. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No. 300/2008, (EU) No. 167/2013, (EU) No. 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act) (Text with EEA relevance). https://eur-lex.europa.eu/eli/reg/2024/1689/oj

National Institute of Standards and Technology. (2023). NIST AI 100-1: Artificial Intelligence Risk Management Framework (AI RMF 1.0). https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf

Organization for Economic Co-operation and Development. (2024). OECD AI Principles overview. OECD.AI GPAI. https://oecd.ai/en/ai-principles

Organization for Economic Co-operation and Development. (2022). OECD framework for the classification of AI systems. https://www.oecd.org/en/publications/oecd-framework-for-the-classification-of-ai-systems_cb6d9eca-en.html

Papagiannidis, E., Mikalef, P., & Conboy, K. (2025). Responsible artificial intelligence governance: A review and research framework. The Journal of Strategic Information Systems, 34(2), 101885. https://doi.org/10.1016/j.jsis.2024.101885

UNESCO. (2021). Recommendation on the Ethics of Artificial Intelligence. https://unesdoc.unesco.org/ark:/48223/pf0000381137

__________________________________________________________________________________

🌍 Country and Jurisdictional Highlights: March 1 through March 31, 2026

The developments highlighted below reflect more than regional legal and regulatory activity. Taken together, they illustrate how jurisdictions are operationalizing AI governance, data privacy, and data protection.

Across Africa, Asia-Pacific, Europe, the Middle East, North America, the United Kingdom, and Latin America, a consistent pattern is emerging. Regulators are moving beyond policy development and toward implementation, oversight, and enforcement. They are focusing less on whether governance frameworks exist and more on whether those frameworks function effectively in practice.

March 2026 highlights demonstrate that this shift is not uniform, but it is directionally aligned. Some jurisdictions are advancing formal legislative frameworks, while others are strengthening enforcement under existing laws and regulations. Moreover, they are embedding AI governance within broader cybersecurity, data protection, and digital infrastructure strategies. Despite these differences, the underlying expectation is increasingly consistent. Organizations must demonstrate how their systems operate, how they manage risks, and how they maintain accountability under real-world conditions.

These regional updates should therefore be read not only as individual developments but also as indicators of a broader transition. Governance is becoming operational, continuous, and evidence-based. Organizations that recognize these signals early will be better positioned to meet evolving regulatory expectations and to sustain defensible, explainable, responsible, and trustworthy systems.

________________________________________________________________________

🌍 Africa

📰Article 1 Title: Why African Countries are Using Data Protection Laws as a Backdoor to Regulate AI

🧭Summary: African governments are increasingly using existing data protection laws as a primary mechanism to regulate artificial intelligence, rather than waiting for standalone AI legislation. This approach reflects a pragmatic strategy in which rules governing personal data, automated decision-making, and cross-border transfers are expanded to address emerging AI risks across sectors such as credit scoring and digital lending.

🔗 Why it Matters: This trend signals that enforceable legal frameworks, rather than aspirational policy development, are operationalizing AI governance in Africa. Organizations operating in the region should expect regulators to apply existing data protection obligations to AI systems, particularly in areas involving automated decision-making, explainability, and fairness.

🔍Source:

📰Article 2 Title: Africa Stakes its Claim in Global AI Governance

🧭Summary: African governments are advancing coordinated AI governance efforts, including the adoption of the Africa Declaration on Artificial Intelligence and the establishment of an Africa AI Council to support implementation. These initiatives reflect growing participation in global AI standard setting and an effort to align national strategies with continental priorities across data, infrastructure, and governance.

🔗 Why it Matters: Africa is transitioning from fragmented national approaches to more coordinated and strategic AI governance frameworks, which will shape regulatory expectations across multiple jurisdictions. Organizations should anticipate greater alignment on governance principles, particularly regarding data use, accountability, and cross-border collaboration.

🔍Source:

📰Article 3 Title: Breaking Data Barriers: How Data Governance across Africa will Define the Continent’s Future

🧭Summary: African policymakers and industry stakeholders are increasingly treating data as strategic infrastructure, recognizing its central role in enabling AI development and economic growth. However, persistent fragmentation, data localization challenges, and a lack of interoperability continue to constrain the scalability of AI systems across the continent.

🔗 Why it Matters: This signals a shift toward governance models that balance data protection with data enablement, particularly in the context of regional trade and digital integration. Organizations must prepare for evolving requirements related to cross-border data flows, interoperability, and data-sharing frameworks that directly affect AI deployment.

🔍Source:

📰Article 4 Title: Africa’s Data Protection Reforms: A Continental Perspective on the Drivers of Change in Legal Frameworks

🧭Summary: This article traces recent data protection reforms in seven African countries, examining how Nigeria, Kenya, Angola, Ghana, Mauritius, Botswana, and others are reshaping their legal frameworks in response to AI, digital trade, and emerging technologies. It highlights how proposals such as Nigeria’s Data Protection (Amendment) Bill 2025 and Ghana’s new Data Protection Bill explicitly address AI, automated decision-making, privacy‑enhancing technologies, and strengthened data subject rights.

🔗 Why it Matters: The piece shows that Africa’s “second wave” of data protection reform is moving beyond GDPR mimicry toward more context‑specific regulation that embeds AI safeguards directly into core privacy laws. For policymakers and organizations, it signals a future in which compliance will require navigating hybrid frameworks that couple conventional data protection obligations with detailed, AI-specific rules on explainability, contestability, and human oversight.

🔍Source:

📰Article 5 Title: Bi-Monthly Update on Privacy in Africa (January & February 2026)

🧭Summary: This update reviews early‑2026 developments across African DPAs, including Burundi’s new data protection law, fresh guidelines in Egypt, law reform efforts in Mauritius and Tunisia, and the establishment of a data protection authority in the Republic of Congo. It also notes that countries such as Djibouti, Ghana, Kenya, Mauritius, Morocco, and Zimbabwe are actively advancing AI governance through strategies, regulatory proposals, and enforcement‑oriented guidance.

🔗 Why it Matters: The piece illustrates a decisive shift toward enforcement, with DPAs like Kenya’s using sanctions and decisions to drive better organizational governance and signal intolerance for serious violations. It also indicates that AI is no longer seen as a distant policy issue; instead, African regulators are incorporating AI risks into regular privacy supervision and participating in global initiatives such as the GPA’s joint statement on AI‑generated imagery.

🔍Source:

__________________________________________________________________________________

🌏 Asia-Pacific

📰Article 1 Title: Anthropic to Sign Deal with Australia on AI Safety and Economic Data Tracking

🧭Summary: Anthropic announced a deal with the Australian government to share economic and operational data about artificial intelligence deployment, including system capabilities and risk insights. The partnership also includes joint research, safety evaluations, and collaboration with universities to better understand AI’s impact on labor markets and economic systems.

🔗 Why it Matters: This development reflects a growing model of public-private governance, where governments rely on AI developers for real-time insight into system performance and risk. Organizations should anticipate heightened expectations around transparency, reporting, and ongoing collaboration with regulators as part of operational accountability frameworks.

🔍Source:

📰Article 2 Title: Notes from the Asia-Pacific Region: NZ Government Releases Cybersecurity Strategy, Privacy Act Reform on the Table

🧭Summary: The New Zealand government released a national cybersecurity strategy alongside renewed consideration of reforms to the Privacy Act, reflecting increasing concern about data protection in AI-enabled environments. These developments are linked to recent cybersecurity incidents and highlight the need for stronger coordination between privacy, security, and digital governance frameworks.

🔗 Why it Matters: This shift toward integrated governance models demonstrates that privacy, cybersecurity, and AI oversight are treated as interdependent rather than separate domains. Organizations should expect regulators to require coordinated controls across data protection, system security, and AI risk management.

🔍Source:

📰Article 3 Title: Asia Legal Enforcement and AI Update 2026

🧭Summary: Legal changes in Asia-Pacific show that AI governance is becoming stricter, with tougher labeling rules for generative AI in China, more scrutiny of India’s AI guidelines, and ongoing use of structured governance frameworks in Singapore. These changes reflect a broader trend toward embedding AI oversight within existing data protection and regulatory systems.

🔗 Why it Matters: This highlights a regional shift toward operational enforcement, where regulators are focusing on how AI systems function in practice rather than solely on policy compliance. Organizations should prepare for increased audits, contractual obligations, and governance expectations tied directly to system performance and risk management.

🔍Source:

📰Article 4 Title: Asia’s AI Era

🧭Summary: This feature surveys how jurisdictions across Asia are racing to regulate AI, from China’s algorithmic and generative AI rules to Vietnam’s dedicated AI law and evolving soft‑law frameworks in places like Hong Kong and Singapore. It analyses common themes such as risk‑tiered obligations, transparency and accountability requirements, and the interplay between AI‑specific measures and existing data privacy and cybersecurity statutes.

🔗 Why it Matters: The article demonstrates that Asia is moving toward a patchwork of converging but distinct AI regimes, with some states favoring prescriptive controls and others relying on principles-based guidance anchored in data protection law. For companies operating region-wide, it highlights the growing need for adaptable governance structures capable of meeting both stringent content security-focused rules and more flexible, ethics-oriented expectations.

🔍Source:

📰Article 5 Title: What is Shaping Artificial Intelligence Governance Policies in Southeast Asia? An Analysis

🧭Summary: This analysis explores the political, economic, and security drivers shaping AI governance policies in Southeast Asia, including concerns about disinformation, labor market disruption, and strategic dependence on foreign technology providers. It compares how states such as Singapore, Indonesia, and Vietnam are blending digital‑economy ambitions with varying degrees of rights protection, risk management, and state control in their emerging AI frameworks.

🔗 Why it Matters: The article shows that AI governance in Southeast Asia is not just a technical regulatory project; it is closely linked to regime stability, developmental goals, and geopolitical alignment. For researchers and policymakers, it offers a nuanced view of why some governments prioritize innovation sandboxes and voluntary codes while others lean toward securitized, surveillance‑friendly approaches with significant implications for data protection.

🔍Source:

__________________________________________________________________________________

🌎 Caribbean, Central, and South America

📰Article 1 Title: UNESCO and CENIA Strengthen Partnership to Advance Ethical Artificial Intelligence with a Focus on Education in Chile and Latin America

🧭Summary: UNESCO and Chile’s National Center for Artificial Intelligence announced a regional partnership to promote ethical artificial intelligence development across Latin America, with a focus on education, capacity building, and governance. The initiative includes training programs, multi-stakeholder collaboration, and the deployment of regional tools such as Latam GPT to support responsible AI adoption.

🔗 Why it Matters: This signals a shift toward institutionalized AI governance frameworks grounded in ethics, education, and regional collaboration rather than isolated national policies. Organizations operating in Latin America should expect growing demands for responsible AI design, workforce training, alignment with human-centered governance principles, and compliance with LGPD data-handling requirements.

🔍Source:

📰Article 2 Title: Data Protection in Latin America: Insights and Perspectives from Regulators

🧭Summary: Regulators from Brazil, Argentina, and Ecuador highlighted increased cooperation through the Ibero American Network of Data Protection Authorities, including efforts to harmonize enforcement approaches and share intelligence on emerging risks such as artificial intelligence and cybersecurity. The initiative includes developing a regional observatory to monitor trends, enforcement priorities, and cross-border data protection issues.

🔗 Why it Matters: This demonstrates that data protection enforcement in Latin America is becoming more coordinated and intelligence-driven rather than purely national in scope. Organizations should expect greater consistency in regulatory expectations and increased scrutiny of cross-border data processing and AI-driven decision-making.

🔍Source:

📰Article 3 Title: Digi Americas LATAM CISCO Insights

🧭Summary: This March 2026 bulletin brings together important updates on digital policy and data governance in the Americas, including how the upcoming 2026 review of USMCA could change rules on digital trade and data flows that affect Mexico and its regional partners. It also flags emerging debates over AI governance and cross‑border data regimes that could influence how Latin American countries balance openness with data sovereignty and privacy concerns.

🔗 Why it Matters: The newsletter covers more than just AI; it places Latin American data protection and AI governance within changing discussions about trade and interoperability, showing how commercial agreements can make privacy and AI rules a permanent part of regional integration. For researchers, it offers early indicators of how renegotiated trade provisions may interact with domestic data protection laws and AI strategies in Mexico and neighboring states.

🔍Source:

📰Article 4 Title: Beyond Data: The New Mandate of the Modern CDO in Latin America in the Age of AI

🧭Summary: A March 2026 analysis highlights that while nearly half of organizations in Latin America have adopted artificial intelligence, only a small proportion are generating measurable business value due to governance, leadership, and integration challenges. The findings emphasize that many organizations remain in early or experimental stages of deployment, with limited operational maturity in managing AI systems.

🔗 Why it Matters: This highlights that the primary barrier to effective AI deployment in the region is not technology, but governance capability and organizational alignment. Organizations must strengthen leadership accountability, data governance, and operational oversight to transition from experimentation to scalable, compliant AI deployment.

🔍Source:

📰Article 5 Title: How Rules for Publicly Available Data are Shaping the Future of AI

🧭Summary: This article examines how different jurisdictions regulate the use of publicly available personal data for training AI systems, focusing on when such collection can rely on “legitimate interests” and when it triggers heightened privacy and transparency obligations. It contrasts more innovation-friendly regimes with stricter approaches that treat large-scale scraping and reuse of public data as inherently high-risk, with implications for global AI development.

🔗 Why it Matters: The piece is directly relevant to Latin America and the Caribbean because many countries in the region are updating or interpreting their data protection laws to address AI training practices that rely heavily on social media, news, and other open datasets. For policymakers and organizations, it shows how their regulation of “publicly available” data will strongly affect local AI ecosystems, including which models can be developed domestically and how cross-border AI services may operate.

🔍Source:

__________________________________________________________________________________

🇪🇺 European Union

📰Article 1 Title: Governance and Enforcement Structure of the AI Act

🧭Summary: In these working group remarks, the EDPS explains how the EU AI Act’s governance and enforcement architecture should function, arguing that data protection authorities are natural regulators for many high-risk AI systems because of their experience enforcing fundamental-rights-based rules under the GDPR. The remarks highlight the multiple intersections between the AI Act and EU data protection law, including the use of special‑category data for bias detection, the need for coordinated fundamental rights and data protection impact assessments, and forthcoming joint EDPB–Commission guidelines on the AI Act–GDPR interplay.

🔗 Why it Matters: The speech clarifies how AI oversight in the EU will be operationalized in practice, with DPAs playing a central role in supervising AI systems that process personal data, rather than governance being siloed in separate “AI‑only” bodies. For organizations, this means AI compliance will be checked against both the AI Act and the GDPR, so governance structures, documentation, and risk assessments must meet the needs of both laws simultaneously.

🔍Source:

📰Article 2 Title: GDPR Enforcement: How EU Regulators are Shaping AI Governance

🧭Summary: This article argues that, even before the AI Act becomes fully operational, GDPR enforcement has functioned as the EU’s de facto horizontal AI governance framework, with DPAs investigating and sanctioning AI‑enabled biometric identification, facial recognition, profiling, and model‑training practices that breach core principles. It also discusses how EU‑level guidance, such as the EDPB’s opinions on AI models and the statement on DPAs’ role in the AI Act framework, clarifies that complex or opaque AI does not reduce obligations around lawfulness, transparency, and data subject rights.

🔗 Why it Matters: The piece demonstrates that AI governance in the EU is not starting from scratch with the AI Act but is deeply rooted in an evolving body of GDPR case law, guidance, and coordinated enforcement. For organizations, it highlights that AI-related risk management must be integrated into data protection programs now, while also tracking impending changes from the Commission’s “Digital Omnibus” proposal, which would adjust GDPR procedures in light of AI-driven processing.

🔍Source:

📰Article 3 Title: CDT Europe’s AI Bulletin: March 2026

🧭Summary: In March 2026, the European Commission published a new draft of the Code of Practice for general-purpose AI models. This draft aims to make compliance easier while keeping transparency, safety, and accountability requirements. The draft shows that the EU AI Act is being implemented by translating general rules into practical guidance.

🔗 Why it Matters: This shows a shift from laws to practical compliance tools, where organizations must show how they put governance requirements into practice in real systems. Companies developing or deploying general-purpose AI models should anticipate increasing expectations around documentation, transparency, and ongoing risk management.

🔍Source:

📰Article 4 Title: The First Warning Came Long Ago: Non-EU Companies Still Didn’t Listen

🧭Summary: In March 2026, a €525,000 GDPR fine against Locatefamily.com reinforced that non-EU companies remain subject to EU data protection law when processing data of EU residents. The case highlights enforcement against organizations that fail to appoint EU representatives or comply with core governance obligations.

🔗 Why it Matters: This confirms the continued expansion of extraterritorial enforcement, where jurisdiction is determined by data subjects rather than organizational location. Organizations deploying AI systems globally must ensure that governance frameworks account for EU obligations even when operations are based outside the region.

🔍Source:

📰Article 5 Title: Generative AI and GDPR Enforcement in Europe: A Lot of Noise, One Fine, Zero Survivors

🧭Summary: In March 2026, the Court of Rome annulled a €15 million fine previously imposed on OpenAI by Italy’s data protection authority, citing deficiencies in the regulator’s legal reasoning. The decision shows that courts are increasingly scrutinizing how AI-related enforcement actions justify themselves under GDPR principles.

🔗 Why it Matters: This highlights that AI enforcement in Europe is entering a more mature phase, where decisions must withstand legal challenge and evidentiary scrutiny. Organizations should expect that both compliance obligations and enforcement actions will be evaluated against rigorous standards of legal and technical justification.

🔍Source:

__________________________________________________________________________________

🌍 Middle East

📰Article 1 Title: Cybersecurity Implications of the 2026 Middle East Escalation: When Cloud Infrastructure Becomes a Target

🧭Summary: A March 2026 report details how military escalation in the Middle East resulted in physical and cyber-attacks on cloud infrastructure, including data centers supporting AI and enterprise systems. The incident disrupted services across multiple sectors and demonstrated how AI-dependent systems can be directly impacted by geopolitical events.

🔗 Why it Matters: This represents a shift in risk modeling for AI governance, where system reliability and data availability are no longer purely technical concerns but also geopolitical ones. Organizations must incorporate infrastructure resilience, data continuity, and cross-region failover strategies into their AI and data protection frameworks.

🔍Source:

📰Article 2 Title: Data Protection Update: March 2026

🧭Summary: A March 2026 legal update highlights increasing enforcement activity across Middle Eastern jurisdictions, including Saudi Arabia, where authorities are strengthening penalties and requiring enhanced cybersecurity controls to protect personal data. The report also notes growing regulatory expectations tied to AI-driven processing and the need for stronger governance frameworks.

🔗 Why it Matters: This signals that data protection regimes in the Middle East are moving from policy development to active enforcement, particularly where AI systems process sensitive or large-scale data. Organizations should expect greater scrutiny of security controls, risk management practices, and the operational effectiveness of governance frameworks.

🔍Source:

📰Article 3 Title: UAE Data Protection Law: Complete Guide to PDPL (2026)

🧭Summary: This guide explains the scope and operation of the UAE’s Federal Decree‑Law No. 45 of 2021 on the Protection of Personal Data (PDPL), emphasizing its broad jurisdiction, GDPR‑inspired principles, and the now‑fully‑operational role of the UAE Data Office. It details practical requirements, such as records of processing, privacy-by-design, DPIAs for AI and profiling projects, and the interaction between the PDPL and the new Federal Decree-Law No. 26 of 2025 on Child Digital Safety.

🔗 Why it Matters: The piece turns a more developed legal framework into practical steps that are directly relevant for AI governance, especially when automated profiling, large-scale surveillance, or children’s data are involved. For organizations in the UAE and beyond, it indicates that strong DPIA practices, clear notices, and technical safeguards are now expected and verifiable, making PDPL compliance a key part of any AI deployment strategy in the country.

🔍Source:

📰Article 4 Title: Data Security and Compliance Risk: 2026 Forecast Report

🧭Summary: This post summarizes findings from a 2026 Kiteworks report on data security and compliance risk in the Middle East, noting that organizations in the region show high awareness of data sovereignty requirements but often lack the technical controls and joint playbooks needed to enforce them. It highlights that regulations such as Saudi Arabia’s PDPL and the UAE PDPL are reshaping operations, yet boards remain exposed when anomaly detection, kill switches, and model lifecycle governance for AI vendors are not fully implemented.

🔗 Why it Matters: The piece captures a key governance gap: regulation around data sovereignty and AI‑related risk has advanced faster than many organizations’ operational capabilities. For risk and compliance teams, it shows that Middle Eastern regulators are moving from awareness to seeking real proof of technical and organizational controls for AI, cloud, and cross-border data use.

🔍Source:

📰Article 5 Title: Data Protection & Privacy 2026

🧭Summary: This jurisdictional practice guide explains how the UAE’s data protection landscape has evolved into a multi-layered system, with the Federal Decree-Law No. 45 of 2021 (PDPL) complemented by free-zone regimes such as DIFC and ADGM that closely mirror GDPR-style principles. It describes the main duties of controllers and processors, the enforcement powers of the UAE Data Office and free-zone authorities, and the practical requirements for consent, transparency, cross-border transfers, and data subject rights that now shape digital and AI-driven services.

🔗 Why it Matters: The guide is a concise yet authoritative snapshot of how “hard law” privacy obligations now structure data governance and AI deployment in one of the region’s most significant digital hubs. For organizations building or scaling AI systems in or through the UAE, it clarifies that compliance must be architected to accommodate overlapping federal and free-zone regimes, each with its own expectations for records of processing, DPIAs, and accountability documentation.

🔍Source:

__________________________________________________________________________________

🌎 North America

📰Article 1 Title: U.S. Data Privacy Laws and Regulations in 2026

🧭Summary: This article surveys the expanding landscape of US comprehensive state privacy laws, noting that three new statutes take effect in Indiana, Kentucky, and Rhode Island in 2026 while additional regulatory updates roll out in California, Connecticut, Oregon, and Utah. It pays particular attention to California’s evolving regime, where new California Privacy Protection Agency regulations will require risk assessments and cybersecurity audits for certain businesses, and the Delete Act will introduce a centralized deletion system for data brokers from August 2026.

🔗 Why it Matters: The piece illustrates that US data protection is increasingly being driven by state‑level experimentation, with special focus on minors’ data, automated decision‑making, data‑broker transparency, and enhanced consumer rights. For companies handling personal data across multiple states, the situation underscores the need for unified yet flexible governance frameworks that address varying definitions of sensitive data, opt-out mechanisms, and AI-related risk-assessment duties.

🔍Source:

📰Article 2 Title: Privacy New Roundup | March 2026

🧭Summary: This roundup reports that the Office of the Privacy Commissioner of Canada (OPC) has updated its guidance to explicitly exclude neural data from the category of sensitive personal information, contrasting this guidance with several US states that have recently classified neural data as sensitive in response to neurotechnology use. It also notes political changes in Canada that have sidelined Bill C-27’s reforms for now, while Bill C-4, passed on 12 March 2026, controversially grants federal political parties immunity from certain privacy obligations.

🔗 Why it Matters: The piece highlights emerging transnational divergences in how North American jurisdictions conceptualize and regulate novel data types, such as neural data, with direct consequences for AI and neurotechnology governance. For researchers and policymakers, it shows that Canadian privacy reform is changing and that discussions about data sovereignty, children’s privacy, and political-party exemptions will shape the future of AI and data protection in the country.

🔍Source:

📰Article 3 Title: Data Privacy, AI Regulatory, and Compliance Update: 2026

🧭Summary: This client alert places North American developments in a global AI and privacy context, highlighting new US measures like Utah’s App Store Accountability Act, which requires full compliance by May 2026, and new profiling rules under various state privacy laws. It argues that organizations must anticipate overlapping regimes, from US state privacy laws to the EU’s general-purpose AI (GPAI) Code of Practice, by unifying their privacy and AI compliance approaches and ensuring consistent rights-handling and documentation.

🔗 Why it Matters: The article underscores that data privacy and AI governance can no longer be run as separate silos; regulators are increasingly examining how AI systems respect underlying privacy rights and transparency duties. For North American entities with cross-border operations, it reinforces the urgency of building integrated, operational compliance programs that can withstand scrutiny from both US regulators and foreign authorities enforcing AI and data-protection norms.

🔍Source:

📰Article 4 Title: Data Protection in the United States

🧭Summary: This updated country chapter explains how the US data‑protection landscape in 2026 now features a broad set of comprehensive state privacy laws layered over sector‑specific federal statutes, with enforcement of updated CCPA regulations carried out by the California Privacy Protection Agency. It notes that 2025 regulatory changes introduced new rules on cybersecurity audits, risk assessments, and automated decision-making technology, and that these are now in effect as companies implement AI-enabled processing.

🔗 Why it Matters: The chapter provides an authoritative reference point for understanding how AI‑related obligations sit within the broader US privacy patchwork, particularly regarding automated decision‑making and enhanced accountability requirements. For global organizations, it clarifies that US compliance now requires aligning AI governance practices not only with EU‑style rules abroad but also with changing state requirements at home that are backed by enforcement.

🔍Source:

📰Article 5 Title: Fasken’s Noteworthy News: Privacy & Cybersecurity in Canada, the US, and the EU (March 2026)

🧭Summary: On March 12, 2026, the Government of Canada introduced Bill C-22, proposing a renewed lawful access framework that would expand authorities’ ability to obtain digital data from service providers. The same period also saw a new finding by the Office of the Privacy Commissioner addressing data retention and anonymization practices in consumer loyalty programs.

🔗 Why it Matters: These developments signal that Canadian data protection is evolving through both legislative reform and enforcement interpretation, particularly in areas involving access to personal data and retention obligations. Organizations must reassess data governance frameworks to ensure compliance with evolving regulatory expectations on law enforcement access, anonymization, and accountability.

🔍Source:

__________________________________________________________________________________

🇬🇧 United Kingdom

📰Article 1 Title: Artificial Intelligence | UK Regulatory Outlook: March 2026

🧭Summary: This regulatory outlook explains the UK government’s 18 March 2026 report on copyright and AI, confirming that there will be no immediate changes to copyright law and that a broad text-and-data-mining exception with an opt-out is no longer the preferred option. It outlines proposed work to make AI-generated content more transparent and better labeled, possibly removing copyright protection for works created entirely by computers, exploring new protections against digital replicas and impersonation, and continuing to rely on licensing and enforcement within the existing framework.

🔗 Why it Matters: The piece shows that the UK is pursuing a “pro‑innovation” but rights‑conscious approach to AI governance, using copyright, transparency, and labeling levers rather than a single omnibus AI statute. For organizations deploying generative AI in the UK, it highlights growing expectations for training data transparency, provenance, and labeling standards, as well as safeguards against harmful impersonation, all of which must be integrated with existing data protection obligations.

🔍Source:

📰Article 2 Title: Why Data Protection Lies at the Heart of Responsible Police Use of Facial Recognition Technology

🧭Summary: In March 2026, the UK Information Commissioner’s Office emphasized its active scrutiny of police use of facial recognition technology to ensure compliance with data protection law. The regulator highlighted concerns related to proportionality, accuracy, and the lawful use of biometric data in operational deployments.

🔗 Why it Matters: This demonstrates that enforcement in the UK is increasingly focused on how AI systems function in practice, particularly in high-impact public sector use cases. Organizations that use biometric or surveillance technologies should expect rigorous evaluation of the lawful basis, safeguards, and real-world performance of their systems.

🔍Source:

📰Article 3 Title: Data Bytes 64: Your UK and European Data Privacy Update

🧭Summary: The ICO issued updated guidance that requires organizations to set up formal processes for handling data protection complaints before the new rules under the Data (Use and Access) Act take effect. The guidance clarifies expectations for accessibility, documentation, and responsiveness in handling data subject concerns.

🔗 Why it Matters: This signals a move toward procedural accountability, where organizations must demonstrate that governance processes function effectively in practice, not just in policy. Companies should prepare for increased scrutiny of complaint handling, documentation, and operational responsiveness as part of broader accountability expectations.

🔍Source:

📰Article 4 Title: Agentic AI: The ICO’s Early Signals on Data Protection Risk

🧭Summary: A March 2026 analysis highlights the ICO’s emerging focus on agentic AI systems, including their ability to act autonomously and interact with external tools and data sources. The report identifies risks related to automated decision-making, transparency, and compliance with core UK GDPR principles.

🔗 Why it Matters: This demonstrates that UK regulators are proactively addressing next-generation AI risks, particularly those associated with autonomous system behavior. Organizations deploying advanced AI systems should anticipate rising expectations regarding monitoring, explainability, and control over system operation.

🔍Source:

📰Article 5 Title: Tech Companies Operating in the UK Told to Make Sure Their Age Assurance Works

🧭Summary: This news update reports that the UK government has launched a three‑month consultation on online child‑safety reforms, including a potential ban on social media for under‑16s, restrictions on livestreaming and location‑sharing, and tighter controls on AI chatbots and gaming platforms. It notes that the proposals contemplate raising the digital age of consent above 13, introducing age checks even for VPN use, and applying the new rules to services that rely on end‑to‑end encryption.

🔗 Why it Matters: The piece indicates that the UK is moving toward a much more interventionist stance on children’s data and AI-mediated online environments, which will have direct implications for data protection compliance and the design of AI-driven features. For platforms and app providers, it means that the lawful processing of minors’ data will increasingly depend on strong age assurance, profiling controls, and governance over how AI systems are used in engagement and safety mechanisms.

🔍Source:

__________________________________________________________________________________
